Is physics “lost in math”?

In a provocative new book, Lost in Math: How Beauty Leads Physics Astray, quantum physicist Sabine Hossenfelder argues that the scientific world in general, and the field of physics in particular, has repeatedly clung to notions that have been rejected by experimental evidence, or has pursued theories far beyond what can be tested by experimentation, mainly because these theories and the mathematics behind them were judged “too beautiful not to be true.” Examples cited by Hossenfelder include:

- Supersymmetry. Supersymmetry, the notion that each particle has a “superpartner,” was originally proposed in the 1970s, and more recently was proposed to explain why, for example, the Higgs boson has the mass it has. But no such superparticles have been found, even in the latest experiments on the Large Hadron Collider. Undeterred, many physicists just “know” it must be true.

- String theory. String theory has been proposed as the long-sought “theory of everything” uniting relativity and quantum theory, mostly because it is so “beautiful.” But in spite of decades of effort by literally thousands of brilliant mathematical physicists, no experimentally testable prediction has been produced. What’s more, hopes that string theory would result in a single crisp physical theory, pinning down unique laws and unique values of the fundamental constants, have been dashed. Instead the theory admits a huge number of different options, by one reckoning 10^500 in number.

- Multiverse. Attempts to explain the apparent fine tuning of the laws and constants of physics have led many physicists to propose a “multiverse,” namely a huge collection of other universes (see previous item, for instance), and to explain fine tuning by saying, in effect, that if our particular universe were not fine-tuned pretty much as we see it, we could not exist and thus would not be here to talk about it; end of story. But other researchers consider this “anthropic principle” argument highly controversial, an abdication of empirically testable physics.

Hossenfelder is hardly alone in expressing concern. For example, physicist Lee Smolin, writing in his 2006 book The Trouble With Physics, declared, “A scientific theory [string theory/multiverse] that makes no predictions and therefore is not subject to experiment can never fail, but such a theory can never succeed either, as long as science stands for knowledge gained from rational argument borne out by evidence.” Similarly, John Horgan wrote in his book The End of Science (see also this Scientific American article), “The persistence of such highly speculative theories … suggests that physics is crashing into insurmountable limits.”

Is economics “lost in math”?


In 2009, Nobel-Prize-winning economist Paul Krugman wrote a lengthy post-mortem of the financial crisis in the New York Times, entitled “How Did Economists Get It So Wrong?” In the opening section of his essay, “I. Mistaking Beauty for Truth,” Krugman wrote, “It’s hard to believe now, but not long ago economists were congratulating themselves over the success of their field.” Theoretical economists thought that they had resolved internal disputes, and were now in agreement that the state of the field was good. In 2004, Ben Bernanke, formerly chairman of the Federal Reserve Board, celebrated the “Great Moderation in economic performance” over the period 1985-2004, which he attributed to “improved economic policy making.”

But in 2008, as Krugman noted, “everything came apart.” Krugman elaborated as follows:

As I see it, the economics profession went astray because economists, as a group, mistook beauty, clad in impressive-looking mathematics, for truth. Until the Great Depression, most economists clung to a vision of capitalism as a perfect or nearly perfect system. That vision wasn’t sustainable in the face of mass unemployment, but as memories of the Depression faded, economists fell back in love with the old, idealized vision of an economy in which rational individuals interact in perfect markets, this time gussied up with fancy equations. …

Unfortunately, this romanticized and sanitized vision of the economy led most economists to ignore all the things that can go wrong. They turned a blind eye to the limitations of human rationality that often lead to bubbles and busts; to the problems of institutions that run amok; to the imperfections of markets — especially financial markets — that can cause the economy’s operating system to undergo sudden, unpredictable crashes; and to the dangers created when regulators don’t believe in regulation.

Krugman’s essay was written in 2009. More recently, Bloomberg columnist Mohamed El-Erian argued that the discipline of economics “is divorced from real-world relevance and has lost credibility.” Among the problems he mentions currently afflicting the field are the following:

- The proliferation of simplifying assumptions that lead to an “overreliance on excessively abstract estimation techniques and approaches.”

- Insufficient consideration of the possibility that financial dislocations can disrupt the economy.

- Poor and grudging adoption of important insights from behavioral science and other disciplines.

- An oversimplification of uncertainty.

- An overemphasis on equilibrium conditions and mean reversion, and an underemphasis on structural changes and tipping points.

Is finance “lost in math”?

Readers of our earlier blogs (see, for example, A and B, and this Forbes interview) will quickly recognize that the field of finance is afflicted with a very similar set of ills:

- An overreliance on theoretical models that yield impressive-looking academic papers and pretty mathematics, but which all too often do not work in the real world.

- Financial strategies based on Gaussian (normal) distributions, which lead to pretty mathematical models, but which may fail, sometimes disastrously, in a real world that does not necessarily obey such distributions.

- A reluctance to incorporate techniques and methodologies from other fields, including rigorous statistical methods, large-scale data analysis, machine learning and high-performance computing.

- Rampant backtest overfitting, much of it rooted in using computer programs to explore millions of alternate configurations of model parameters, yet failing to disclose these explorations either in published journals or to prospective customers.

- A reluctance to recognize that many financial strategies have run their course and are no longer yielding statistically significant above-market returns (if they ever did!).
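
To make the Gaussian point in the list above concrete, here is a minimal sketch comparing how often a move of k standard deviations should occur under a normal distribution versus a fat-tailed alternative. The fat-tailed model chosen here (a Student-t distribution with 3 degrees of freedom, rescaled to unit variance) is purely an illustrative assumption, not a claim about any actual market, and the function names are hypothetical.

```python
import math

def normal_tail(k):
    """Two-sided tail probability P(|Z| > k) for a standard normal Z."""
    return math.erfc(k / math.sqrt(2.0))

def student_t3_tail(k):
    """Two-sided tail probability P(|X| > k), where X is Student-t with
    3 degrees of freedom rescaled to unit variance (an illustrative
    fat-tailed assumption). Uses the closed-form CDF for 3 degrees of freedom."""
    return 1.0 - (2.0 / math.pi) * (k / (1.0 + k * k) + math.atan(k))

# Compare the two models at 3 and 5 standard deviations.
for k in (3, 5):
    ratio = student_t3_tail(k) / normal_tail(k)
    print(f"{k}-sigma move: normal={normal_tail(k):.2e}, "
          f"fat-tailed={student_t3_tail(k):.2e}, ratio={ratio:.0f}x")
```

Under these assumptions, a five-sigma move is thousands of times more likely than the Gaussian model suggests, which is the kind of gap that can turn a pretty model into a costly one.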

Many of these difficulties are rooted in the failure to employ, or even to fully appreciate the need to employ, the full power of modern rigorous statistical analysis, or, more generally, to incorporate rigorous standards of reproducibility that have already been adopted in most other fields of pure and applied science. The results of these lapses are entirely predictable: investment strategies that look great on paper, but which produce disappointing or even disastrous results in practice.
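
The backtest overfitting problem mentioned above can be sketched with a small simulation. The code below is a toy illustration under stated assumptions, not a description of any particular firm's practice: every "strategy" is pure Gaussian noise with zero true edge, the one-year daily horizon and 1% daily volatility are made-up parameters, and the function names are hypothetical. It reports the best annualized Sharpe ratio found after trying many configurations.

```python
import random
import statistics

def annualized_sharpe(daily_returns, periods_per_year=252):
    """Annualized Sharpe ratio of a return series (risk-free rate taken as 0)."""
    mean = statistics.fmean(daily_returns)
    stdev = statistics.stdev(daily_returns)
    return (mean / stdev) * periods_per_year ** 0.5

def best_backtest_sharpe(n_trials, n_days=252, daily_vol=0.01, seed=0):
    """Simulate n_trials strategies with zero expected return and
    return the highest in-sample Sharpe ratio among them."""
    rng = random.Random(seed)
    best = float("-inf")
    for _ in range(n_trials):
        returns = [rng.gauss(0.0, daily_vol) for _ in range(n_days)]
        best = max(best, annualized_sharpe(returns))
    return best

single = best_backtest_sharpe(1)     # one honest trial
many = best_backtest_sharpe(1000)    # report only the best of 1000 trials
print(f"single trial: {single:.2f}, best of 1000: {many:.2f}")
```

Because both calls use the same seed, the first simulated strategy is identical in each, so the best-of-many figure can never be lower than the single-trial figure. In practice it is typically far higher, even though no strategy here has any real edge, which is why undisclosed trials make a reported backtest hard to interpret.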

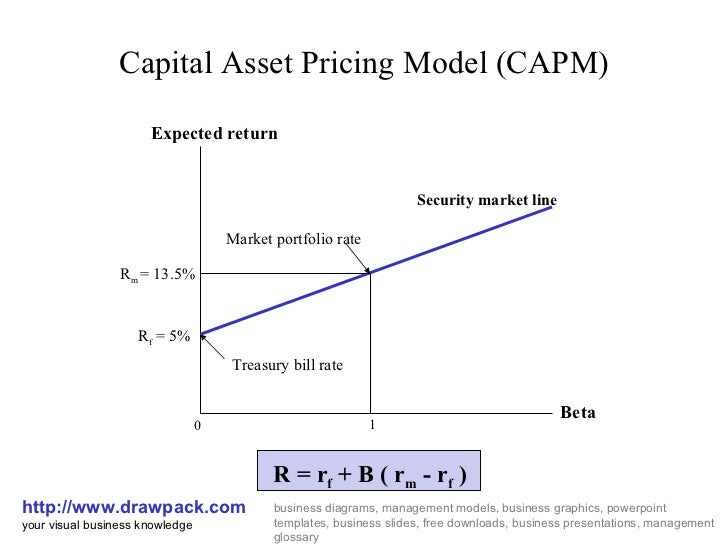

Consider, as a single example, the Capital Asset Pricing Model (CAPM), the bedrock of financial economics. It was developed by William Sharpe, a Nobel laureate, and others back in the 1960s. Even today, students around the world are still taught that the expected return of a security is a linear function of the risk-free rate and the “risk premium.” That’s it: your formula to riches. Everything can be condensed in a simple formula that can be estimated with 18th-century mathematics. Since its original publication, this formula has been presented in virtually every finance textbook, and is the foundation of the “factor investing” and “smart beta” movements in particular.
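
For readers who want to see just how simple the formula is, the following minimal sketch writes it out in its standard textbook form: expected return = risk-free rate + beta × (expected market return − risk-free rate), with beta estimated as the covariance of asset and market returns divided by the variance of market returns. The function names and the sample numbers are hypothetical and chosen only for illustration.

```python
def estimate_beta(asset_returns, market_returns):
    """Estimate beta as Cov(asset, market) / Var(market).
    The 1/(n-1) factors cancel, so plain sums of deviations suffice."""
    n = len(market_returns)
    if n != len(asset_returns) or n < 2:
        raise ValueError("need two equal-length series with at least 2 points")
    mean_a = sum(asset_returns) / n
    mean_m = sum(market_returns) / n
    cov = sum((a - mean_a) * (m - mean_m)
              for a, m in zip(asset_returns, market_returns))
    var = sum((m - mean_m) ** 2 for m in market_returns)
    return cov / var

def capm_expected_return(risk_free_rate, beta, expected_market_return):
    """Textbook CAPM: risk-free rate plus beta times the market risk premium."""
    return risk_free_rate + beta * (expected_market_return - risk_free_rate)

# Made-up returns: the asset moves exactly twice as much as the market.
market = [0.01, -0.02, 0.015, 0.03, -0.01]
asset = [2.0 * r for r in market]
beta = estimate_beta(asset, market)
print(capm_expected_return(0.02, beta, 0.08))
```

The whole model fits in a dozen lines and a single estimated parameter per security, which is exactly the simplicity the surrounding discussion calls into question.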

So could things really be that simple? Financial markets today are incredibly complex systems, where millions of individuals interact with each other, exchanging information asynchronously and asymmetrically. More importantly, present-day financial markets are dominated by large financial operators, many of which have incorporated sophisticated quantitative mathematical techniques and algorithms, together with real-time access to large-scale datasets. Is the 60-year-old CAPM an accurate reflection of today’s highly computerized markets? Obviously not.

Nor is this crisis limited to the world of academic finance. In an earlier blog, we listed by name, with web references, numerous financial news sources and financial providers, including a number of major banks and brokerages, that promote untested, unproven, and, in many cases, utterly pseudoscientific strategies that may have a patina of impressive-looking mathematics but which are utterly ineffective in today’s market: eyeballing charts and graphs, “technical analysis,” “waves,” “Fibonacci ratios,” and more. These news sources, banks and brokerage houses thus lure investors into wasteful, costly, unscientific and potentially disastrous frequent trading, while offering guidance that has no objective information value. Given the scientific bankruptcy of the underlying claims, in our view this looms as a scandal of staggering proportions.

Bringing economics and finance into 21st-century empirical science

It is clear that for economics and finance to reclaim their status as 21st-century empirical sciences, they must let go of those models and techniques that may be mathematically “beautiful” but which demonstrably no longer apply in the real world. Instead, they need to more fully embrace state-of-the-art “big data” empirical science:

- Technology to collect and maintain very large datasets.

- Discipline-specific datasets and data-handling technology.

- State-of-the-art data analytics.

- State-of-the-art machine learning techniques to automatically extract useful information from data.

- High-performance computing technology, using many processors and memory units working in tandem in a high-performance network, to magnify the available computing power.

There are encouraging signs that this transformation is taking place. Thus there is hope for a future of more rational and empirically based foundations for these disciplines.

Additional information on big data in finance is given in a previous blog, “How is big data impacting the finance world?” Many of these themes are elaborated in greater detail in a new book, Advances in Financial Machine Learning.